Day 2 at Google Cloud Next 2023

Security and network visibility

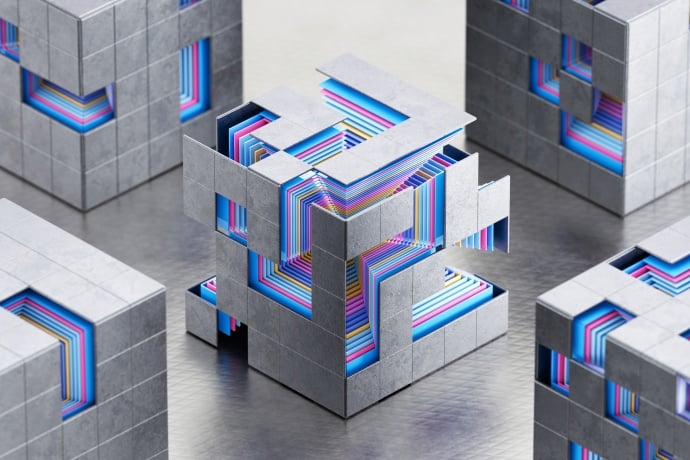

More Complexity, More Breaches

While generative AI has dominated discussions at the Google Cloud Next conference, security and threat mitigation strategies were close behind.

The challenge has become particularly fraught as customers scramble to outfit their infrastructure with a mix of products and security policies – a state of affairs that has also caught the attention of threat actors.

“The biggest enemy is complexity,” noted Alex Au Yeung, chief product officer of Symantec by Broadcom. He added that in a post-pandemic world that hasn’t quite returned to normal, threats continue to grow.

In particular, the shift to a more hybrid world presents a host of new security challenges for organizations, including the likelihood that threat actors will use Gen AI to launch more sophisticated malware, he said.


But Symantec, which has been working with AI and machine learning for the last couple of decades, is also investigating ways to blunt threat actors and make it easier for customers to enforce their security policies.

You can also read more about how Symantec is working with Google Cloud to deliver customer-focused innovations that will simplify the deployment, management, and maintenance of secure, Zero Trust access to private applications and corporate resources.

Is Better Network Visibility Possible?

Broadcom also presented its work on improving network visibility on day 2 of Google Cloud Next. Broadcom presenters Michael Melillo, Senior Director of Network Management, and Mike Hustler, Head of Engineering for AppNeta, flashed a Gartner statistic on screen that was sure to interest the engineering-heavy crowd that turned out to hear them: by 2024, Gartner predicted, nearly 60% of IT spending on application software will be directed toward cloud technologies.

That’s the good news. The not-so-good news: IT operations teams are having a bear of a time managing and securing their cloud data as it spreads across a variety of hybrid and multi-cloud environments.

Melillo and Hustler had some recommendations for their audience on network visibility. It starts, they explained, with recognizing that networks are constantly in a state of transformation. The challenge is compounded by the fact that enterprises often operate in a dynamic environment that involves both internal data and third parties outside their control. On top of that, the rise of hybrid and remote work means that users are changing locations more than ever before.

That puts the onus on IT Ops teams to adopt measures that expand their visibility into network applications, traffic flows, and distributed locations so they can better manage cloud workflows and maintain a good end-user experience.

So what can you do?

Start with a short to-do list:

- Decide what data is important. Understand end-to-end capacity and how your apps may be impacting connections.

- Know where network issues are cropping up. That’s the best way to understand who is responsible for fixing the problem.

- Stay proactive. Continuous alert monitoring leads to better results than simply establishing that a problem is not your fault.

As Melillo noted, a lot of people are making network decisions. That also means that everyone inside the organization will, or should, share some level of accountability if they’re going to tame the growing complexity of their operations and deliver a top-notch network experience.
