Quantity Does Not Equal Quality

Symantec Enterprise – a powerful ally for our customers

The MITRE ATT&CK® Evaluation provides powerful data for those who can put the results into the context of their networks and the needs of their SOCs. MITRE does not declare a winner, and instead simply encourages participation. Unfortunately, many vendors have seized on a single raw result with some chart-topping attribute and declared it a proof point. That proof point is then used to turn an evaluation report into a contest and to declare themselves victors. Without real-world context this is a hollow victory, or as some may call it: Marketing!

The MITRE ATT&CK® Evaluation results give us as vendors a solid idea of how we can get better. They give customers a view of how well their product can defend them against these threats. And they can help a customer compare products, but only when the results are judged within the context of usability, complexity and required customization. Declaring a product better based only on quantity of alerts, for example, means quality is not considered. And the “winner” is likely a product that is noisy and unusable.

For instance, not all detections are equal, especially detections that depend on the SOC to create them. The MITRE ATT&CK® Evaluation allows you to add customized detections. No disagreement here. But some vendors have very high expectations of their customers’ ability to write custom scripts. What is not measured is the effort to write these scripts. Can anyone in the SOC create such a script, or does it require an advanced degree or extensive training? Vendors who rise to the top of the results by depending on the SOC to write complicated scripts have really just shifted the burden of detection to the customers they are claiming to protect. Who is providing the value here?

So, can you use the evaluation to tell which product is best? This is probably the wrong goal. But you can get an excellent idea of how good a product is in a realistic attack scenario. Let’s look at alerts. On the surface it seems like the more alerts you produce on potential attacks the better. But anyone working in a SOC knows that more is not better when it comes to alerts, especially when the vendor alerts on every instance of a file being compressed or every PowerShell command being executed. Just looking at all these alerts would overwhelm a SOC. No one has the resources to look into that many alerts.

Of course, having the fewest alerts is probably not a good thing either. One way to look at these results is that most vendors are too hot or too cold. The soup is best when the temperature is just right.

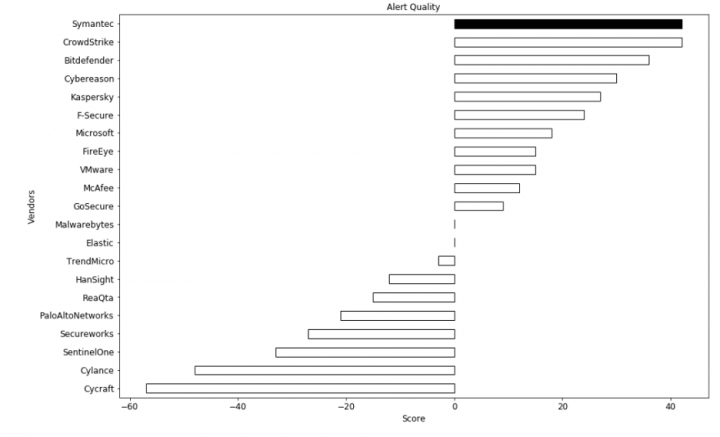

We’ve tried to create a model to help understand the results. It’s not about who has the most alerts, or the fewest. It’s about the vendors that have them just right. We want to measure quality of alerts, not quantity.

We went through and assigned a value to each alert type. We gave a high value to alerts on critical techniques, things like Credential Dumping, UAC Bypass, Exfiltration, Service Execution, and Persistence. We used a score of +3. You might want to use a higher or lower score.

For alerts that did not indicate a critical technique being used to further an attack, things like File and Directory Discovery, Commonly Used Ports, Query Registry and File Deletion, we gave a -3. Again, you should set the score to what you feel is right. But given our years of threat-hunting experience, these values seemed just right to us.
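To make the weighting concrete, here is a minimal Python sketch of the idea. It is not the downloadable script mentioned in this post. The function names and the two sample alert lists are hypothetical, and scoring any technique not named above as 0 is an assumption made only for this illustration.

```python
# Weights from the text above: +3 for critical techniques, -3 for low-value ones.
CRITICAL_WEIGHT = 3
NOISE_WEIGHT = -3

# Technique names as listed in this post.
CRITICAL_TECHNIQUES = {
    "Credential Dumping",
    "UAC Bypass",
    "Exfiltration",
    "Service Execution",
    "Persistence",
}
NOISY_TECHNIQUES = {
    "File and Directory Discovery",
    "Commonly Used Ports",
    "Query Registry",
    "File Deletion",
}


def alert_weight(technique: str) -> int:
    """Return the weight for one alert, keyed by the technique it fired on."""
    if technique in CRITICAL_TECHNIQUES:
        return CRITICAL_WEIGHT
    if technique in NOISY_TECHNIQUES:
        return NOISE_WEIGHT
    # Assumption for this sketch: techniques not named in the post are neutral.
    return 0


def quality_score(alerted_techniques: list[str]) -> int:
    """Sum the weights of every alert a product raised (one entry per alert)."""
    return sum(alert_weight(t) for t in alerted_techniques)


# Hypothetical alert lists, not taken from any real evaluation result.
focused_vendor = ["Credential Dumping", "UAC Bypass", "Persistence"]
noisy_vendor = focused_vendor + ["Query Registry"] * 4 + ["File Deletion"] * 2

print(quality_score(focused_vendor))  # 3 critical alerts: 3 * 3 = 9
print(quality_score(noisy_vendor))    # same 9, minus 6 noisy alerts * 3 = -9
```

In this sketch both products catch the same three critical techniques, but the one that also raises six low-value alerts ends up with a negative score. Changing the two weight constants is all it takes to try your own values.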

Now we have a measurement of the effectiveness of our products, and we know we are on the right track with our product strategy. We are building high-quality, actionable detections that help focus the SOC on the events that matter, and we are giving the SOC the tools to analyze and respond. That’s our goal: being powerful allies to the SOC, not giving them more work. Our focus is not winning these “marketing” exercises, but rather making our customers the winners.

If you’d like to try this for yourself, adjust the numbers, or use this model for other parts of the evaluation results, the script can be downloaded here.
